Excel And Big Data

Excel can handle not only small data sets but very large ones as well. Big data can be described as data with high variety, high velocity, or high volume. High variety means the data comes in many shapes that can change quickly over time. High velocity means the data arrives very fast and needs frequent updates and changes. High volume means the data set is very large and contains a great many items. Big data also calls for cost-effective processing and innovative types of analysis that deliver new business value.

Big data and the role of Excel

Various technologies can be used to work with big data: custom applications for operational analytics, pre-built vertical solutions, exploratory and ad hoc analysis, processing, capture, infrastructure, and storage.

A major use case is ad hoc or exploratory analysis. Here, analysts prefer to use the analysis tools they are most comfortable with to learn as much as they can about the data sets they receive. In most cases their needs go beyond variety, velocity, and volume: they also want to query the data, get predictive and prescriptive insights, and bring in social feeds and other unstructured data.

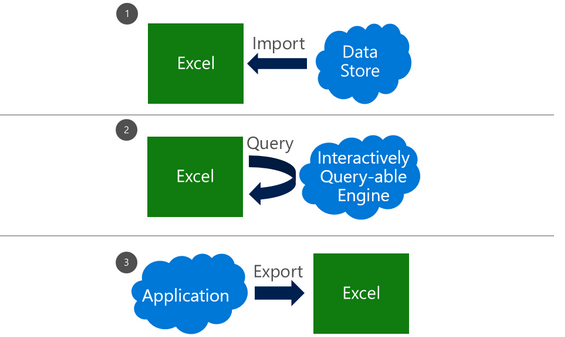

Broadly, there are 3 ways to work with external data in Excel, each with its own use cases and set of dependencies. All of them can, however, be combined in 1 workbook.

How to import data

Many customers import external data through a connection, as a refreshable snapshot. This creates a self-contained document that can be worked on even without a network connection. Customers can also change the data so that it reflects their analytic needs or personal context.
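The idea of a refreshable snapshot can be illustrated with a minimal Python sketch. This is not how Excel implements connections; `RefreshableSnapshot`, `fetch_from_source`, and `external_source` are hypothetical names invented for the illustration, and the in-memory list merely stands in for a real external source.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Row = Dict[str, object]


@dataclass
class RefreshableSnapshot:
    """A local copy of external data that can be re-pulled on demand (hypothetical)."""

    load: Callable[[], List[Row]]  # function that reads the external source
    rows: List[Row] = field(default_factory=list)
    refresh_count: int = 0

    def refresh(self) -> None:
        # Copy every row so the snapshot stays usable when the source is unreachable.
        self.rows = [dict(row) for row in self.load()]
        self.refresh_count += 1


# Stand-in for an external source; a real one would sit behind a network connection.
external_source: List[Row] = [{"item": "A", "qty": 1}]


def fetch_from_source() -> List[Row]:
    return external_source


snapshot = RefreshableSnapshot(load=fetch_from_source)
snapshot.refresh()

# The source changes, but the snapshot keeps its old contents until refreshed.
external_source.append({"item": "B", "qty": 2})
rows_before = len(snapshot.rows)

# A local edit changes only the copy, not the source.
snapshot.rows[0]["qty"] = 99

snapshot.refresh()
rows_after = len(snapshot.rows)
```

In this sketch a refresh simply replaces the local copy, so the local edit to `qty` is gone afterwards. That is a simplification chosen for the example, not a statement about Excel's behavior.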

Take note, however, of these challenges when importing large data sets:

-

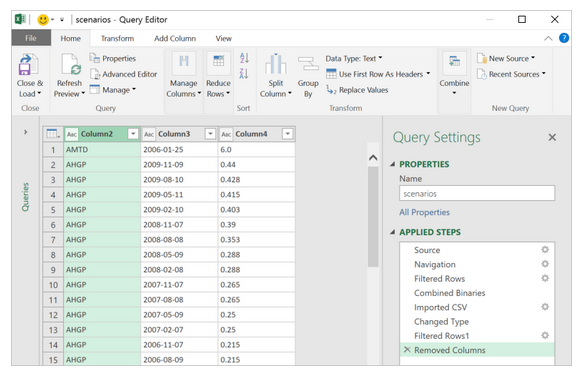

Huge data querying: Sources of data for huge data including HDFS, SaaS as well as relational sources that are large might need special tools in some cases. Power query has a lot of new connectors that has this ability.

- Data transformation: Any data set, big ones included, can contain some noise. Power Query helps set up transformation steps that are coherent, repeatable, and auditable, which produces a clean, reliable, transformed data set.

- Handling large data sources: Power Query can quickly provide a fluid preview of the data. Queries can then be refined to filter down to subsets of the data.

- Handling semi-structured data: This is very often required with big data. Power Query offers elegant ways of working with deeply hierarchical data or data spread across many files.

- Handling large data volumes: The data model, available in Excel 2013, can work with data sets that exceed the worksheet limit of roughly 1 million rows (1,048,576).
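The transformation, filtering, and volume points in the list above can be sketched in plain Python. This is only an illustration of the ideas, not Power Query itself (which has its own formula language); the step functions, the `SAMPLE` data, and `fits_on_worksheet` are assumptions made up for the example. The one fixed fact used is the worksheet capacity of 1,048,576 rows.

```python
import csv
import io

# Row capacity of a single Excel worksheet; larger sets need the data model.
EXCEL_SHEET_MAX_ROWS = 1_048_576


def strip_whitespace(row):
    """Step 1: trim stray spaces from every field."""
    return {key: value.strip() for key, value in row.items()}


def drop_missing_amount(row):
    """Step 2: discard rows with no amount (returning None removes the row)."""
    return row if row["amount"] else None


def amount_to_float(row):
    """Step 3: convert the amount text to a number."""
    return {**row, "amount": float(row["amount"])}


# The ordered list of steps doubles as an audit trail: rerunning it on the
# same input gives the same output.
STEPS = [strip_whitespace, drop_missing_amount, amount_to_float]


def transform(rows, steps=STEPS):
    """Apply every step to every row lazily, skipping rows that a step rejects."""
    for row in rows:
        for step in steps:
            row = step(row)
            if row is None:
                break
        else:
            yield row


def fits_on_worksheet(row_count, has_header=True):
    """Check whether a row count (plus an optional header row) fits on one sheet."""
    return row_count + (1 if has_header else 0) <= EXCEL_SHEET_MAX_ROWS


# Hypothetical noisy input: padded fields and one row with a missing amount.
SAMPLE = "region,amount\n north , 10.5\nsouth,\n north ,4\neast, 7 \n"

reader = csv.DictReader(io.StringIO(SAMPLE))
clean = list(transform(reader))

# Filter down to a subset of the data before loading it anywhere.
north_only = [row for row in clean if row["region"] == "north"]
```

Because `transform` is a generator, rows are processed one at a time, so the same steps could run over a source far larger than memory. A check such as `fits_on_worksheet(len(north_only))` then indicates whether the filtered subset could go onto a single worksheet.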

External source live query

A data model can be upsized to a standalone SQL Server Analysis Services database to support live queries against external sources.

Export to Excel

Many other applications can export their data to Excel.
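As a minimal sketch of what such an export can look like, the Python below writes rows as CSV, a format Excel opens directly. The `export_rows` helper and the sample rows are hypothetical. The standard `csv` module's default dialect is named `excel` and quotes fields that contain commas.

```python
import csv
import io


def export_rows(rows, fieldnames, stream):
    """Write a header and the given rows as CSV using the default 'excel' dialect."""
    writer = csv.DictWriter(stream, fieldnames=fieldnames)
    writer.writeheader()
    writer.writerows(rows)


# Hypothetical application data; the second region contains a comma on purpose.
rows = [
    {"region": "north", "amount": 14.5},
    {"region": "east, coastal", "amount": 7.0},
]

buffer = io.StringIO()
export_rows(rows, ["region", "amount"], buffer)
csv_text = buffer.getvalue()
```

To write a real file instead of an in-memory buffer, open it with `open(path, "w", newline="", encoding="utf-8-sig")`, where `path` is a placeholder for your own file name. The `newline=""` argument is what the `csv` module documentation recommends, and the `utf-8-sig` encoding adds a byte order mark that helps Excel detect UTF-8 text.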